I Built Skills Because My Notes App Was Full of Dead Prompts

Open your Notes app right now. Search "prompt."

Count what comes up.

If you're anything like the 200+ developers I've talked to in the last six months, the answer is somewhere between "more than I want to admit" and "oh god I forgot about that one."

You have a folder of prompts that work. The one that writes your release notes the way your team actually wants them. The one that turns a messy PR into a clean changelog entry. The one that drafts customer emails in your tone. The standup summarizer. The weekly recap generator. The "explain this code in plain English" template that took you 12 tries to get right and now lives forever in a screenshot.

None of them run.

They're just text. Pasted into ChatGPT every time you need them. Copy. Paste. Edit. Paste. Edit again. Wait for output. Copy the output. Paste it where it actually needs to go.

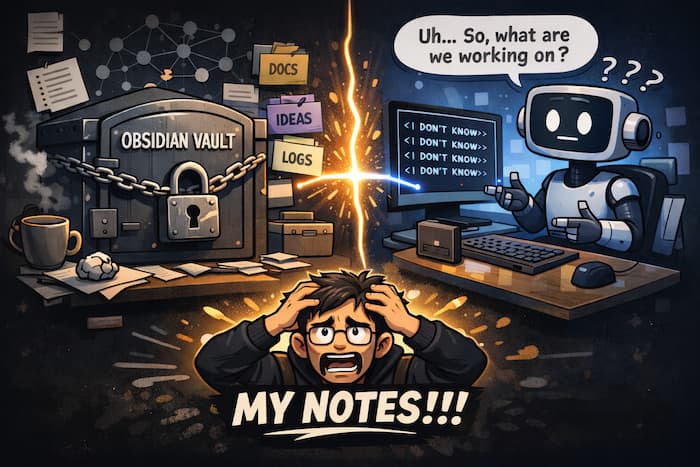

This is the prompt graveyard, and you're not the only one who has one.

The Hidden Cost Of "I'll Just Paste It Again"

Here's the cost nobody talks about.

Every time you re-type or re-paste a prompt, something drifts. You change a word. You forget a constraint. You leave out the example you added last time that made the output good. You think you're using "the prompt that works" — but you're actually using "the prompt that worked the first time, three weeks ago, before I tweaked it twice."

By the time you've used a good prompt ten times, you've used ten slightly different prompts. The output quality wobbles. You start blaming the model. "GPT-5 is getting worse," you say. The model didn't get worse. Your prompt did, and you didn't notice because there was no version of it that was actually fixed in place.

This is the same problem we used to have with shell scripts before we put them in a repo. The same problem we had with email templates before tools like Front and Mixmax. The same problem we had with code snippets before Gist existed.

The work was real. The artifact was real. The home for the artifact was a chat history and a Notes app, neither of which was built to be the home for it.

The Other Cost: Context Lives Where The Work Lives

There's a deeper problem.

The prompts you'd want to save aren't generic. They're project-specific. The release note prompt is good because it knows your product's voice. The PR summarizer is good because it knows your codebase's conventions. The customer reply template is good because it references your refund policy.

When that prompt lives in your Notes app, it lives away from the project. Your teammate can't find it. Your future self can't find it. Six months from now when you need it, you'll search Slack DMs and ChatGPT history for forty minutes and rewrite it from scratch because that's faster than the search.

The prompts that are most valuable are the ones most tied to a specific project. And those are exactly the ones that have nowhere to live.

What A Real Home For Prompts Looks Like

If we built it from scratch, knowing what we know now, what would it look like?

A prompt would live inside the project it belongs to, the same way a README does.

It would have a name and a description so you and your team can find it.

It would be a template, not a paste — with placeholders you fill in at run time.

It would have version history so the prompt that worked last week doesn't quietly become a different prompt this week.

It would run on your own LLM key so the output stays yours and the cost goes through your account, not someone else's.

It would keep a log of every run — input, output, tokens, cost — so you can actually see whether your prompt is getting better or worse over time.

It would be callable from your AI agent so Claude or Cursor or Copilot can run it for you without you having to copy-paste yet again.

This is the shape of the thing. We just shipped it.
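To make the version-history idea concrete, here is a minimal Python sketch. It is not ContextForge's implementation; the class names `PromptRecord` and `PromptVersion` and the short content digest are assumptions made for illustration. The point is only that every distinct prompt body gets its own numbered, immutable version, so a silent edit cannot overwrite the prompt that worked.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptVersion:
    """One immutable snapshot of a prompt body (hypothetical structure)."""
    number: int
    body: str
    digest: str  # short SHA-256 of the body, used to spot silent edits


@dataclass
class PromptRecord:
    """A named prompt with an append-only version history (hypothetical)."""
    name: str
    description: str
    versions: list[PromptVersion] = field(default_factory=list)

    def save(self, body: str) -> PromptVersion:
        """Append a new version only if the body actually changed."""
        digest = hashlib.sha256(body.encode("utf-8")).hexdigest()[:12]
        if self.versions and self.versions[-1].digest == digest:
            return self.versions[-1]
        version = PromptVersion(len(self.versions) + 1, body, digest)
        self.versions.append(version)
        return version


record = PromptRecord("release-notes", "Release notes in our team's voice")
v1 = record.save("Summarize {{changes}} as release notes.")
same = record.save("Summarize {{changes}} as release notes.")
v2 = record.save("Summarize {{changes}} as short release notes.")
print(v1.number, same.number, v2.number)  # 1 1 2
```

Saving an unchanged body returns the existing version, while a one-word tweak produces version 2 with a different digest. That is exactly the kind of drift a Notes app never surfaces.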

Meet Skills

Skills are reusable AI prompts that live inside one of your ContextForge projects.

Each Skill has:

A name and description — visible in your project's Skills tab

A prompt body with {{variables}} you substitute at run time

A provider and model — Anthropic Claude or OpenAI GPT, your pick

An optional save target — auto-save each run's output to a knowledge space

An execution history — every run, with input, output, tokens, cost, and timestamp

You write the prompt once. You name it. You run it from the dashboard with one click, or from Claude via MCP with one sentence. You stop pasting.

Here's what one of my own Skills looks like.

Name: Feature Announcement Pack

Provider: OpenAI / gpt-5-mini

Body:

You are a developer-marketing assistant for ContextForge Memory.

A new feature just shipped:

FEATURE: {{feature}}

WHAT IT DOES: {{what_it_does}}

WHO BENEFITS: {{audience}}

Produce 4 outputs in this exact order:

## 1. Tweet — under 280 chars, one emoji, developer voice

## 2. LinkedIn post — 3 paragraphs, confident but humble

## 3. Changelog entry — Title / Summary / What's new / Why it matters

## 4. Docs blurb — 2 paragraphs, plain English, no marketing fluff

When I ship a new feature, I open the Skill and paste this into the input panel:

    {
      "feature": "Skills",
      "what_it_does": "Save reusable AI prompts as named, versioned templates inside a project.",
      "audience": "developers tired of re-typing prompts"
    }

Click Execute. Five seconds later I have a tweet, a LinkedIn post, a changelog entry, and a docs blurb — all in my voice, all consistent with last week's announcement, all auto-saved to my Content Marketing space for review.

[Demo recording: building and running this exact Skill in under 3 minutes]

Next week, when I ship the next feature, the only thing that changes is the JSON.
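If you're curious what "template plus JSON" amounts to mechanically, the substitution step can be sketched in a few lines of Python. This is an illustration under assumptions, not how Skills renders prompts internally: the `render` function and its strict missing-variable check are hypothetical, and only the `{{name}}` placeholder syntax comes from the Skill body shown earlier.

```python
import re

# Matches {{name}} with optional inner whitespace, e.g. {{ feature }}.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, params: dict[str, str]) -> str:
    """Fill {{name}} placeholders; fail loudly if any variable is missing."""
    needed = {m.group(1) for m in PLACEHOLDER.finditer(template)}
    missing = needed - set(params)
    if missing:
        raise KeyError(f"missing variables: {sorted(missing)}")
    return PLACEHOLDER.sub(lambda m: params[m.group(1)], template)


template = "FEATURE: {{feature}}\nWHO BENEFITS: {{audience}}"
params = {
    "feature": "Skills",
    "audience": "developers tired of re-typing prompts",
}
print(render(template, params))
```

Raising on a missing variable is a deliberate choice in this sketch: a forgotten constraint should stop the run instead of quietly producing a slightly different prompt.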

BYOK, Audit Log, And Why That Matters

Two things people ask first:

"Does ContextForge see my prompts and outputs?"

Your prompts and outputs live in your project. They're scoped to your organization by row-level security (RLS), so nobody else can read them. We don't train models on them, and we don't keep copies anywhere outside your organization's rows.

"Whose LLM key is being used?"

Yours. You add your Anthropic or OpenAI key to your project's settings, and Skills calls your account directly. We don't mark up tokens. We don't have a "ContextForge LLM credits" SKU. The dashboard shows the estimated cost of every run in USD so you can keep an eye on spend, but the bill goes from OpenAI/Anthropic to you, not through us.

The audit log is the third piece. Every run is recorded — successful or failed — with tokens, cost, the exact input params, and the model's output. You can click any row to expand it and see exactly what went in and what came out. If a Skill starts producing worse output, you can compare runs side by side and see where it drifted.

This is the part that's hard to do on your own. Even if you save your prompts in a Notion doc, you don't have a record of every time you ran them and what came out. That record is what turns prompts from craft into engineering.
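For a sense of what one row in such a log might hold, here is a rough Python sketch. The `RunRecord` fields mirror the items listed above (input params, output, tokens, cost, timestamp), but the structure itself is an assumption, and the per-million-token prices are placeholders, not real OpenAI or Anthropic rates.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RunRecord:
    """One logged run of a Skill (hypothetical shape, for illustration)."""
    skill: str
    params: dict
    output: str
    input_tokens: int
    output_tokens: int
    ok: bool
    at: datetime

    def estimated_cost_usd(self, in_per_million: float, out_per_million: float) -> float:
        """Estimate spend from token counts and per-million-token prices."""
        total = (self.input_tokens * in_per_million
                 + self.output_tokens * out_per_million)
        return total / 1_000_000


# Placeholder prices in USD per million tokens, NOT real provider rates.
IN_PRICE, OUT_PRICE = 1.00, 4.00

run = RunRecord(
    skill="Feature Announcement Pack",
    params={"feature": "Skills"},
    output="(model output here)",
    input_tokens=400,
    output_tokens=900,
    ok=True,
    at=datetime.now(timezone.utc),
)
print(f"${run.estimated_cost_usd(IN_PRICE, OUT_PRICE):.4f}")  # $0.0040
```

Because each record keeps the exact params and output alongside the token counts, two runs of the same Skill can be compared field by field when quality starts to wobble.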

The MCP Angle

There's one more piece, and it's the part that closes the loop.

Skills are exposed as MCP tools. That means Claude itself can run your Skills. Six tools are shipped: skills_list, skills_get, skills_create, skills_update, skills_run, skills_delete.

In practice, that looks like this:

"Run my Feature Announcement Pack skill for feature 'Routines' with what_it_does='schedule any Skill on a cron expression' and audience='devs who want cron for AI'"

Claude calls skills_run, the LLM does the work against your key, the run is logged in the same audit log as your dashboard runs, and the output comes back to your conversation.
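Under the hood, an MCP client invokes a tool by sending a JSON-RPC 2.0 `tools/call` request. The Python sketch below builds roughly what that message could look like for the sentence above. The envelope (`method`, `params.name`, `params.arguments`) follows the MCP specification; the argument names `skill` and `params` are assumptions, since the actual input schema of `skills_run` isn't shown here.

```python
import json

# JSON-RPC 2.0 request an MCP client sends to invoke a tool.
# The keys inside "arguments" are hypothetical; check the tool's
# published input schema for the real field names.
request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {
        "name": "skills_run",
        "arguments": {
            "skill": "Feature Announcement Pack",  # hypothetical field
            "params": {                            # hypothetical field
                "feature": "Routines",
                "what_it_does": "schedule any Skill on a cron expression",
                "audience": "devs who want cron for AI",
            },
        },
    },
}
print(json.dumps(request, indent=2))
```

You never write this by hand. Claude builds the call from your sentence, which is why the one-line request is enough.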

You stop pasting. You stop re-typing. You start composing.

What This Replaces

If you've been using:

A Notes app for prompts → Skills replaces it. Versioned, executable, findable.

ChatGPT custom GPTs for personal prompts → Skills is the project-scoped, BYOK, no-vendor-lock-in version.

A bookmarked Claude conversation → Skills runs the same prompt without you having to open that conversation and scroll.

A doc full of "useful prompts" that nobody on your team actually opens → Skills lives next to the project, in the tool the team is already in.

Try It

If you've got a prompt you've been pasting into ChatGPT this week — the daily standup one, the customer reply one, the PR summarizer, the one you whisper to Claude every Monday morning — that prompt has a home now.

Open ContextForge → pick a project → Skills

Click New Skill

Paste the prompt. Replace your variable parts with {{placeholders}}.

Run it.

The prompt graveyard ends today.
